AI in Cybersecurity: How It Works, Core Use Cases, and Deployment Framework

Your security team just flagged 4,200 alerts in a single day. The analysts are overwhelmed. Half the alerts are false positives, yet each one still needs investigation. Meanwhile, the real breach that occurred three hours ago stays buried in the noise. This is the current state of enterprise security operations, and AI in cybersecurity is changing how those operations run.

Organizations using AI-powered threat detection reduce mean time to detect (MTTD) by 74% and cut breach costs by an average of $3 million per incident, according to IBM’s Cost of a Data Breach Report 2023. AI systems have become an essential component of business cybersecurity.

What Is AI in Cybersecurity? Architecture, Algorithms, and Security Intelligence

AI in cybersecurity applies machine learning, deep learning, and natural language processing to detect threats and automate response in order to protect digital assets. As a result, security shifts from a reactive posture to a predictive one.

The Three-Layer Architecture

An AI security architecture has three layers. The data ingestion layer collects logs, packets, endpoint signals, and user behavior. Next, the intelligence layer uses ML models to identify patterns and detect anomalies. Finally, the action layer triggers automated responses or notifies human analysts.
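The three layers can be sketched as three small functions chained together. This is a minimal illustration, not a production design: the `Event` fields, the ratio-based `score` function (a stand-in for a trained model), and the thresholds are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class Event:
    source: str      # illustrative labels such as "endpoint" or "firewall"
    user: str
    bytes_out: int


def ingest(raw_records: Iterable[dict]) -> list[Event]:
    """Data ingestion layer: normalize raw records into one event shape."""
    return [Event(r["source"], r["user"], int(r["bytes_out"])) for r in raw_records]


def score(event: Event, typical_bytes_out: float) -> float:
    """Intelligence layer: placeholder for an ML model.

    Returns a 0..1 risk score that grows as the transfer size exceeds the
    typical volume. A real deployment would call a trained model here.
    """
    ratio = event.bytes_out / typical_bytes_out
    return min(1.0, max(0.0, (ratio - 1.0) / 9.0))


def act(event: Event, risk: float, auto_threshold: float = 0.8) -> str:
    """Action layer: automate on high risk, notify an analyst on medium risk."""
    if risk >= auto_threshold:
        return f"auto-contain:{event.user}"
    if risk >= 0.3:
        return f"notify-analyst:{event.user}"
    return "log-only"


# Hypothetical input records from three different sources.
raw = [
    {"source": "endpoint", "user": "alice", "bytes_out": 1_000},
    {"source": "firewall", "user": "bob", "bytes_out": 5_000},
    {"source": "cloud", "user": "carol", "bytes_out": 20_000},
]
decisions = [act(e, score(e, typical_bytes_out=1_000)) for e in ingest(raw)]
print(decisions)
```

Keeping the layers as separate functions means the scoring logic can be swapped for a real model without touching ingestion or response handling.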

Core Algorithms

The core algorithms are simple in concept. Supervised learning classifies malware. Unsupervised clustering detects unusual behavior. Reinforcement learning adapts responses as threats evolve. Together, they create systems that don’t just react; they learn.

Enterprise platforms like Microsoft Sentinel (formerly Azure Sentinel), IBM QRadar, and Splunk SIEM integrate these models at scale, allowing security intelligence to operate continuously across complex environments.

How AI in Cybersecurity Works: Machine Learning Models, Behavioral Analytics, and Threat Detection Pipelines

Traditional signature-based systems catch only known threats. Modern AI security systems take a different approach: they establish a baseline of normal behavior for every user, device, and application, then monitor for deviations from that baseline in real time.
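One simple way to picture baselining is a z-score check: summarize a user's past activity as a mean and standard deviation, then measure how far a new observation sits from that mean. The sketch below assumes a single numeric signal (daily download volume) and an illustrative cutoff of 3 standard deviations; real behavioral models combine many signals and are considerably more sophisticated.

```python
from statistics import mean, stdev


def build_baseline(history: list[float]) -> tuple[float, float]:
    """Summarize past observations as (mean, standard deviation)."""
    return mean(history), stdev(history)


def deviation_score(value: float, baseline: tuple[float, float]) -> float:
    """Return how many standard deviations `value` sits from the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        return 0.0 if value == mu else float("inf")
    return abs(value - mu) / sigma


# Hypothetical daily download volumes (MB) for one user over two weeks.
history_mb = [120, 135, 110, 128, 140, 125, 118, 132, 127, 121, 138, 115, 129, 124]
baseline = build_baseline(history_mb)

for today_mb in (131, 900):
    z = deviation_score(today_mb, baseline)
    status = "ANOMALY" if z > 3 else "normal"  # cutoff of 3 is an assumption
    print(f"{today_mb} MB -> z={z:.1f} ({status})")
```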

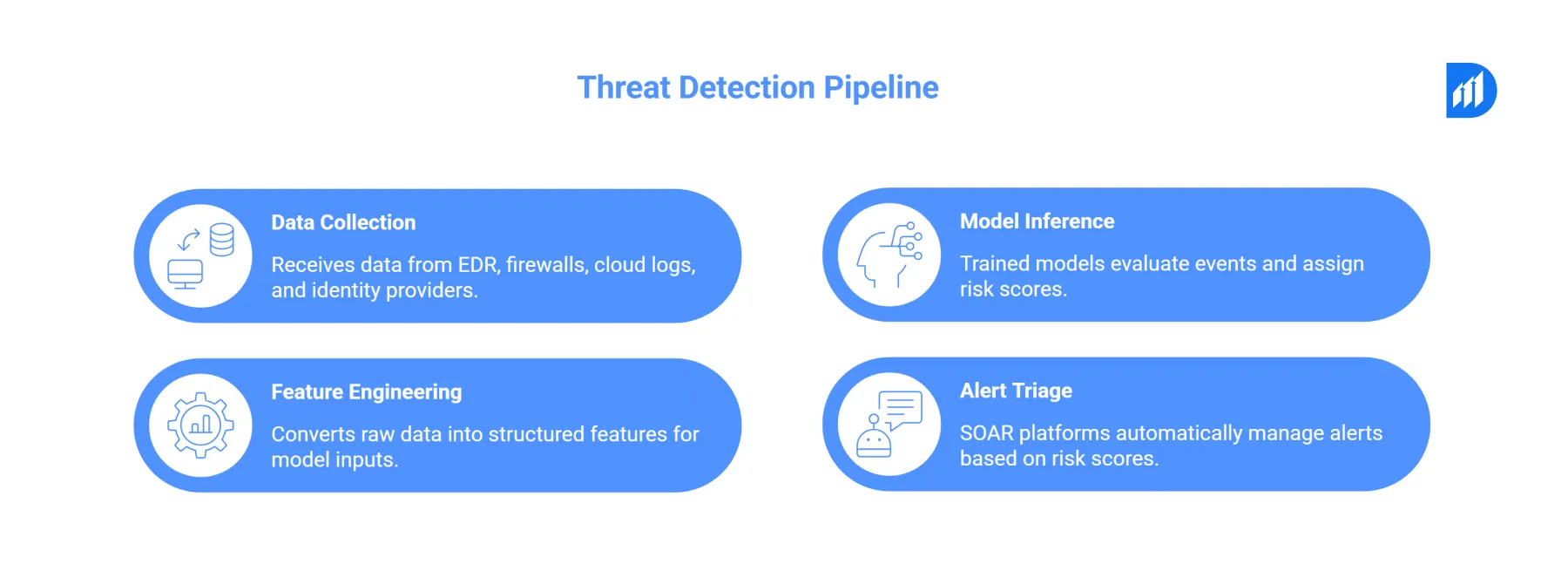

The Threat Detection Pipeline

The threat detection pipeline works like this:

- Data collection: The system ingests data from Endpoint Detection and Response (EDR) tools, firewalls, cloud logs, and identity providers into a central stream, typically carried by Apache Kafka or Azure Event Hubs.

- Feature engineering: The system converts raw data into structured features. Login time, geolocation, request frequency, and data transfer size become model inputs.

- Model inference: Trained models score every event. A login from a new country at 3 AM scores as high risk. In contrast, a routine batch job scores as low risk.

- Alert triage: SOAR platforms such as Palo Alto XSOAR and Microsoft Sentinel triage alerts automatically by risk score, which reduces analyst workload by 60%.

According to Gartner’s 2023 Security Operations report, this method achieves a 55% decrease in false positives when compared to rule-based systems.
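The feature engineering, inference, and triage steps of the pipeline can be sketched in a few lines. Everything specific here is an assumption for illustration: the event fields, the `KNOWN_COUNTRIES` lookup, the hand-picked `WEIGHTS` (standing in for a trained model), and the triage thresholds.

```python
from datetime import datetime

# Hypothetical per-user context; a real pipeline would load this from stored history.
KNOWN_COUNTRIES = {"alice": {"US"}, "svc-batch": {"US"}}

# Illustrative weights standing in for a trained model.
WEIGHTS = {"off_hours": 0.3, "new_country": 0.5, "large_transfer": 0.2}


def engineer_features(event: dict) -> dict:
    """Feature engineering: turn a raw login event into numeric model inputs."""
    ts = datetime.fromisoformat(event["timestamp"])
    known = KNOWN_COUNTRIES.get(event["user"], set())
    return {
        "off_hours": 1.0 if ts.hour < 6 or ts.hour >= 22 else 0.0,
        "new_country": 0.0 if event["country"] in known else 1.0,
        "large_transfer": 1.0 if event["bytes_out"] > 50_000_000 else 0.0,
    }


def infer_risk(features: dict) -> float:
    """Model inference: weighted sum of features, in the range 0..1."""
    return sum(WEIGHTS[name] * value for name, value in features.items())


def triage(risk: float) -> str:
    """Alert triage: map a risk score to a queue."""
    if risk >= 0.7:
        return "escalate"
    if risk >= 0.3:
        return "review"
    return "suppress"


events = [
    # A login from a new country at 3 AM.
    {"user": "alice", "timestamp": "2024-03-02T03:05:00", "country": "RO", "bytes_out": 2_000_000},
    # A routine daytime batch job from a known location.
    {"user": "svc-batch", "timestamp": "2024-03-02T14:00:00", "country": "US", "bytes_out": 1_000_000},
]
results = [triage(infer_risk(engineer_features(e))) for e in events]
print(results)
```

The 3 AM login from an unfamiliar country lands in the escalation queue, while the batch job is suppressed, mirroring the two examples in the model inference step.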

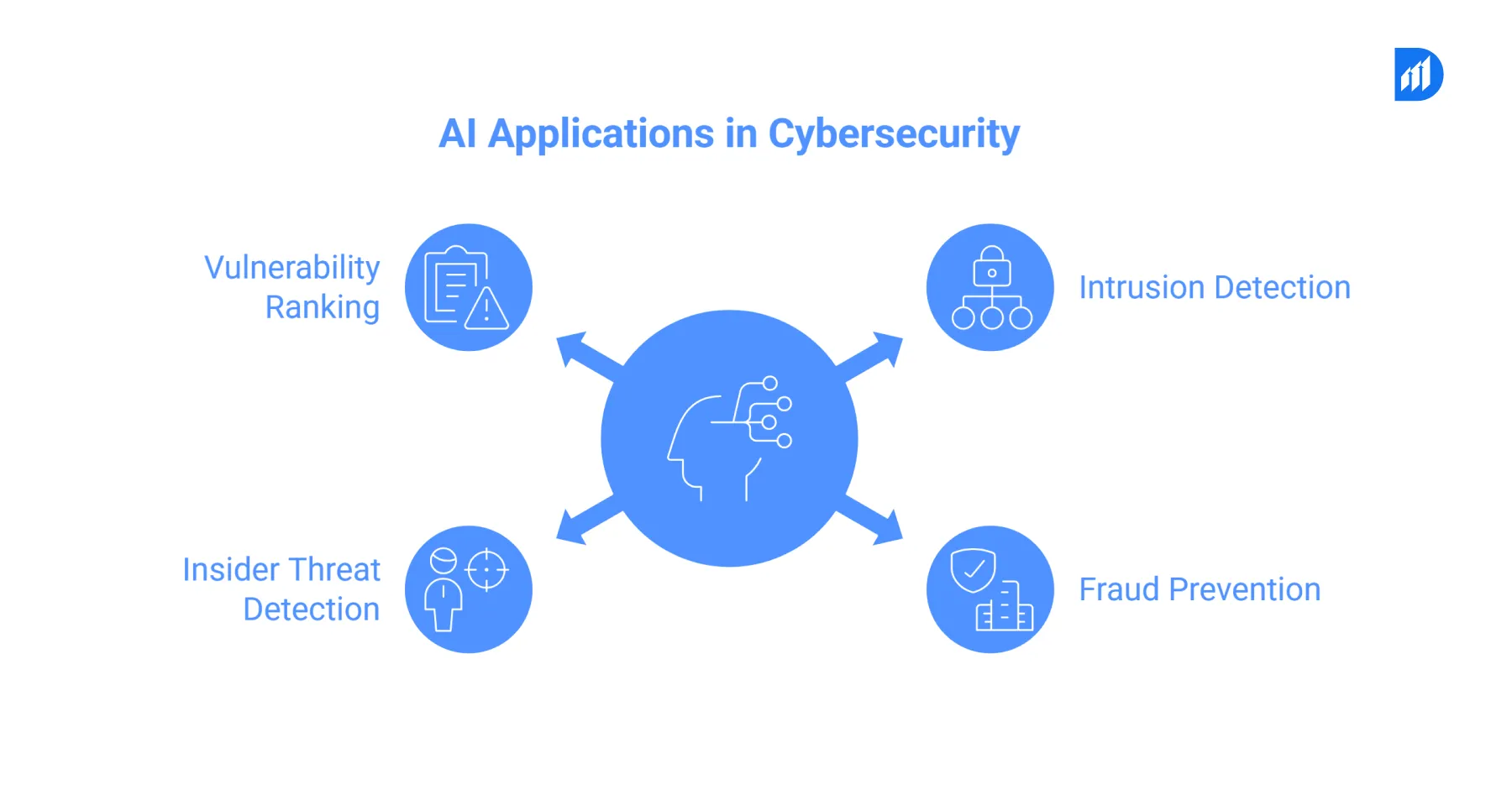

Core Use Cases of AI in Cybersecurity: Threat Detection, Fraud Prevention, and Incident Response Automation

The most impactful applications of AI in cybersecurity span multiple attack surfaces. Each one applies AI to a specific, real threat:

- Intrusion detection: Machine learning models analyze network traffic patterns to recognize port scanning, lateral movement, and command-and-control communication.

- Fraud prevention: Financial institutions that use AI systems reduce fraudulent transaction losses by 40 to 60 percent. The models evaluate more signals at once than rule engines can handle.

- Insider threat detection: User and Entity Behavior Analytics (UEBA) detects when employees access data outside their normal access patterns. For instance, the system triggers an alert when a finance analyst downloads 50,000 records at midnight.

- Vulnerability ranking: AI evaluates CVEs based on their actual risk in your environment instead of relying on CVSS scores alone. As a result, the team works on the most critical issues first.
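To make the vulnerability ranking idea concrete, the sketch below adjusts a CVSS base score with environmental context. The multipliers are hand-picked assumptions standing in for what a trained model would learn, and the finding IDs are placeholders rather than real CVEs.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    finding_id: str          # placeholder identifier, not a real CVE
    cvss: float              # CVSS base score, 0 to 10
    internet_facing: bool
    exploit_observed: bool
    asset_criticality: int   # 1 (low) to 5 (business critical)


def contextual_risk(f: Finding) -> float:
    """Scale the base score by exposure, exploit activity, and asset value."""
    score = f.cvss / 10.0
    score *= 1.5 if f.internet_facing else 0.7    # assumed exposure multiplier
    score *= 2.0 if f.exploit_observed else 1.0   # assumed exploit multiplier
    score *= f.asset_criticality / 5.0
    return round(score, 3)


findings = [
    Finding("EXAMPLE-A", 9.8, internet_facing=False, exploit_observed=False, asset_criticality=2),
    Finding("EXAMPLE-B", 7.5, internet_facing=True, exploit_observed=True, asset_criticality=5),
    Finding("EXAMPLE-C", 5.3, internet_facing=True, exploit_observed=False, asset_criticality=3),
]
ranked = sorted(findings, key=contextual_risk, reverse=True)
print([(f.finding_id, contextual_risk(f)) for f in ranked])
```

Sorting by CVSS alone would put EXAMPLE-A first. With context applied, the internet-facing, actively exploited finding on a critical asset moves to the top.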

Across these areas, AI tools deliver measurable ROI. According to Forrester Research, enterprises achieve a 3.05x return on their AI-driven security investments within 18 months.

AI-Powered Security Operations Centers (SOC): Enhancing SIEM and SOAR Capabilities

Today’s security operations center runs on continuous data streams. Analysts process thousands of security incidents from their SIEM systems every day. Without AI, a typical organization expects one analyst to monitor 1,500 security incidents per hour. AI changes that ratio dramatically.

How AI Transforms SOC Operations

Microsoft Sentinel uses machine learning to correlate security events across large data sets and surfaces only the incidents that need human evaluation. SOAR systems then automate containment. As a result, isolating a compromised endpoint takes 8 seconds instead of the 8 hours the previous process required.
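The correlation step can be illustrated with a deliberately simple rule: group alerts per host within a time window and surface a group only when it contains several distinct alert types. This is a rule-based stand-in, not the machine learning correlation a SIEM actually performs, and the alert fields, 10-minute window, and two-signal minimum are assumptions.

```python
from collections import defaultdict


def correlate(alerts: list[dict], window_s: int = 600, min_signals: int = 2) -> list[dict]:
    """Group alerts per host into time-windowed incidents.

    An incident is surfaced only when it contains at least `min_signals`
    distinct alert types, which filters out isolated alerts.
    """
    by_host = defaultdict(list)
    for alert in sorted(alerts, key=lambda a: a["ts"]):
        by_host[alert["host"]].append(alert)

    groups = []
    for host, host_alerts in by_host.items():
        group = [host_alerts[0]]
        for alert in host_alerts[1:]:
            if alert["ts"] - group[-1]["ts"] <= window_s:
                group.append(alert)          # still inside the same window
            else:
                groups.append((host, group))  # close the group, start a new one
                group = [alert]
        groups.append((host, group))

    return [
        {"host": host, "types": sorted({a["type"] for a in group}), "count": len(group)}
        for host, group in groups
        if len({a["type"] for a in group}) >= min_signals
    ]


# Hypothetical alerts; `ts` is seconds since the start of the observation period.
alerts = [
    {"ts": 0, "host": "web-01", "type": "port_scan"},
    {"ts": 50, "host": "db-02", "type": "failed_login"},
    {"ts": 120, "host": "web-01", "type": "new_admin_login"},
    {"ts": 300, "host": "web-01", "type": "outbound_c2"},
    {"ts": 5000, "host": "db-02", "type": "failed_login"},
]
incidents = correlate(alerts)
print(incidents)
```

Five raw alerts collapse into one incident for `web-01`; the two isolated failed logins on `db-02` never reach an analyst.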

The impact is clear. AI-driven security operations centers detect threats 52% faster than traditional ones and reduce operational expenses by 35%. Certifications such as GIAC Security Essentials (GSEC) and Certified AI Security Professional (CAISP) now train analysts for a role that combines machine intelligence with human skill.

Why SOC Analysts Still Matter in AI-Driven Environments

AI handles the volume. But human analysts still make final decisions on ambiguous threats. The combination of AI speed and analyst judgment is what makes a modern SOC effective. Neither works as well without the other.

Natural Language Processing (NLP) and AI for Phishing Detection and Malware Analysis

Phishing remains the entry point for 91% of cyberattacks. To counter it, AI applies Natural Language Processing models that analyze email structure, sender behavior, link trustworthiness, and message content.

Microsoft Defender uses transformer-based NLP models to score phishing probability. These models detect spear-phishing attacks which use writing patterns and situational details to bypass standard security filters.
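The kinds of signals involved (message content, sender, and links) can be shown with a small keyword and link heuristic. To be clear, this is not a transformer model and not how Microsoft Defender works; it is a hand-written scoring sketch whose term list and weights are assumptions, meant only to show what the inputs to such a model look like.

```python
import re
from urllib.parse import urlparse

# Illustrative urgency phrases; a trained NLP model would learn these patterns.
URGENCY_TERMS = ("urgent", "verify your account", "password expires", "immediately", "suspended")


def extract_signals(sender_domain: str, body: str) -> dict:
    """Derive simple content and link signals from an email body."""
    text = body.lower()
    link_hosts = [urlparse(u).hostname or "" for u in re.findall(r"https?://\S+", body)]
    return {
        "urgency_hits": sum(term in text for term in URGENCY_TERMS),
        # Links whose host is neither the sender's domain nor a subdomain of it.
        "mismatched_links": sum(
            1 for h in link_hosts
            if not (h == sender_domain or h.endswith("." + sender_domain))
        ),
        # Links that point at a raw IPv4 address instead of a hostname.
        "ip_links": sum(1 for h in link_hosts if re.fullmatch(r"\d{1,3}(\.\d{1,3}){3}", h)),
    }


def phishing_score(signals: dict) -> float:
    """Combine signals into a 0..1 score using assumed weights."""
    raw = (0.2 * signals["urgency_hits"]
           + 0.3 * signals["mismatched_links"]
           + 0.3 * signals["ip_links"])
    return min(1.0, raw)


suspicious = phishing_score(extract_signals(
    "example.com",
    "URGENT: verify your account immediately at http://192.0.2.10/login or it will be suspended.",
))
benign = phishing_score(extract_signals(
    "example.com",
    "Minutes from today's meeting: https://docs.example.com/notes",
))
print(suspicious, benign)
```

A heuristic like this misses well-written spear-phishing messages, which is the gap that learned language models are meant to close.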

How AI Handles Zero-Day Malware

AI-based malware analysis combines static and dynamic analysis. Static analysis examines code structure without running the code. Dynamic analysis monitors behavior inside a sandbox. Together, these methods detect zero-day malware with 94% accuracy, compared to 62% for signature-based detection.
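As one example of a static feature, byte entropy can be computed without executing a file. Packed or encrypted payloads tend to have entropy close to 8 bits per byte, so entropy is commonly fed to a classifier alongside other features. The sketch below shows only the feature calculation; the sample inputs are synthetic, and entropy alone is not a malware verdict.

```python
import math
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of `data` in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    entropy = 0.0
    for count in Counter(data).values():
        p = count / total
        entropy += p * math.log2(1 / p)
    return entropy


# Synthetic inputs: one repeated byte versus every byte value equally often.
repetitive = b"A" * 1024
uniform = bytes(range(256)) * 4

print(shannon_entropy(repetitive))
print(shannon_entropy(uniform))
```

In practice the value would be computed per file section and combined with other static features such as imports and strings before a model makes a decision.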

Generative AI in cybersecurity adds another layer. Analysts now use large language models to produce natural-language summaries of malware behavior, which cuts incident report writing time from 45 minutes to under 5 minutes.

AI for Endpoint, Network, and Cloud Security Monitoring

Every device, every packet, and every cloud workload generates security signals. AI systems process these signals faster than human analysts can.

- Endpoint security: CrowdStrike Falcon and SentinelOne use on-device ML models. These models identify ransomware by detecting rapid file encryption and terminate the process within 100 milliseconds, before it causes major damage.

- Network security: Darktrace uses AI-powered Network Detection and Response (NDR) tools to model normal traffic patterns. The system also identifies encrypted malware traffic that traditional deep packet inspection fails to detect.

- Cloud security: Advanced cybersecurity solutions use AI to monitor IAM role activity, API calls, and storage access patterns across AWS, Azure, and GCP. The system detects misconfigurations, which attackers exploit in 82% of cloud breaches, before a breach occurs.

Cybersecurity automation across all three layers creates a unified defense. When an attacker runs a coordinated attack against endpoint, network, and cloud systems, all three layers generate synchronized security alerts. This level of automation is what separates modern defenses from legacy approaches.
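The endpoint behavior described above, catching rapid file encryption, can be approximated with a sliding-window rate check on file writes per process. This is a simplified sketch rather than how any named product works: the class name, the 50-writes-per-second limit, and the timestamps are assumptions, and real agents use ML models over many more signals.

```python
from collections import deque


class WriteBurstDetector:
    """Flag a process that modifies too many files within a short window."""

    def __init__(self, max_writes: int = 50, window_s: float = 1.0):
        self.max_writes = max_writes
        self.window_s = window_s
        self._events: dict[int, deque] = {}

    def observe(self, pid: int, ts: float) -> bool:
        """Record one file write; return True if the process should be stopped."""
        q = self._events.setdefault(pid, deque())
        q.append(ts)
        # Drop writes that have fallen out of the sliding window.
        while q and ts - q[0] > self.window_s:
            q.popleft()
        return len(q) > self.max_writes


detector = WriteBurstDetector(max_writes=50, window_s=1.0)

# Hypothetical process 100 writes a file every 500 ms (normal activity).
normal_flags = [detector.observe(pid=100, ts=i * 0.5) for i in range(20)]
# Hypothetical process 200 writes a file every 5 ms (encryption-like burst).
burst_flags = [detector.observe(pid=200, ts=i * 0.005) for i in range(100)]

print(any(normal_flags), burst_flags.index(True))
```

In this simulation the burst is flagged on the 51st write, about 250 ms after it starts, while the slow writer is never flagged.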

Deployment Framework for AI in Cybersecurity: Infrastructure, Data Strategy, and Model Governance

Deploying advanced AI security systems takes more than installing software. A structured framework helps organizations avoid failure.

| Phase | Activity | Key Tools |
| --- | --- | --- |
| Data Foundation | Centralize logs, normalize formats | Azure Log Analytics, Splunk |
| Model Development | Train on labeled threat data | Python, scikit-learn, Azure ML |
| Integration | Connect to SIEM/SOAR | Microsoft Sentinel, Palo Alto XSOAR |
| Monitoring | Track model drift, alert accuracy | MLflow, Databricks |
| Governance | Audit trails, explainability | SHAP, Azure Responsible AI |

Managing Model Drift Over Time

Model drift is a significant ongoing risk. Attackers develop new methods regularly, so a model trained on last year’s threats can miss new attack patterns. Continuous retraining pipelines built with Databricks and MLflow help maintain model accuracy over time. Monthly retraining cycles reduce model performance loss by 67%.
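One common way to quantify drift is the Population Stability Index (PSI), which compares the distribution of model scores at training time with the distribution seen in production. The sketch below is a plain-Python illustration, not part of MLflow or Databricks; the score samples are synthetic, and the 0.25 retraining cutoff is a widely quoted rule of thumb used here as an assumption.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index between two samples of scores in [0, 1]."""

    def proportions(sample: list[float]) -> list[float]:
        counts = [0] * bins
        for s in sample:
            counts[min(int(s * bins), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(sample), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic score samples: training scores spread evenly, live scores shifted upward.
training_scores = [i / 100 for i in range(100)]
live_scores = [0.5 + i / 200 for i in range(100)]

drift = psi(training_scores, live_scores)
needs_retraining = drift > 0.25  # assumed cutoff
print(f"PSI={drift:.2f}, retrain={needs_retraining}")
```

A scheduled job could compute this value on each day's scores and start a retraining run when it crosses the cutoff.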

Keeping AI Models Compliant During Deployment

Governance is not a one-time task. Teams must log every model decision and run regular bias checks throughout the model’s life. This keeps the deployment both effective and compliant.

Security, Compliance, and Regulatory Considerations (GDPR, HIPAA, ISO 27001)

AI systems process sensitive data. Compliance is non-negotiable.

GDPR requires clear explanations for automated decisions. As a result, AI security models must log why a user was flagged. SHAP (SHapley Additive exPlanations) values provide this at the individual decision level.
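For a linear model with independent features, the SHAP value of each feature reduces to the weight times the feature's distance from its average, so a per-decision explanation can be computed by hand and written to an audit log. The sketch below uses that special case in plain Python rather than the `shap` library; the model weights, background averages, and record fields are all hypothetical.

```python
import json

# Illustrative linear risk model: score = BIAS + sum(weight * feature).
WEIGHTS = {"failed_logins": 0.05, "off_hours": 0.25, "new_device": 0.30}
BIAS = 0.05
# Hypothetical feature averages over the training data (the background).
BACKGROUND_MEAN = {"failed_logins": 1.0, "off_hours": 0.2, "new_device": 0.1}


def predict(x: dict) -> float:
    return BIAS + sum(WEIGHTS[k] * x[k] for k in WEIGHTS)


def linear_shap(x: dict) -> dict:
    """Per-feature attribution for a linear model: w_i * (x_i - mean_i)."""
    return {k: WEIGHTS[k] * (x[k] - BACKGROUND_MEAN[k]) for k in WEIGHTS}


def audit_record(user: str, x: dict) -> str:
    """Serialize the decision and its explanation as one JSON log line."""
    return json.dumps({
        "user": user,
        "score": round(predict(x), 4),
        "baseline_score": round(predict(BACKGROUND_MEAN), 4),
        "contributions": {k: round(v, 4) for k, v in linear_shap(x).items()},
    }, sort_keys=True)


flagged = {"failed_logins": 6, "off_hours": 1, "new_device": 1}
contributions = linear_shap(flagged)
print(audit_record("user-123", flagged))
```

The contributions add up to the difference between this user's score and the baseline score, which is the property that makes the log entry usable as an explanation of why the user was flagged.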

Similarly, HIPAA requires access controls and audit logging for healthcare data. Any AI platform that handles patient data needs to encrypt its logs and restrict model access by user role.

ISO 27001 also aligns naturally with AI security operations. Research shows that organizations with ISO 27001 certification experience 40 percent fewer successful cyberattacks each year. Building advanced cybersecurity solutions on a compliance-first design has two main benefits: it reduces audit preparation time by 60 percent, and it helps organizations avoid the average penalty of 4.4 million dollars per GDPR violation.

Implementation Challenges: Data Quality, Model Drift, False Positives, and Ethical Risks

The tools are powerful, but using them creates real difficulties. Teams face four main obstacles.

- Data quality: The quality of training data determines how accurate AI models will be. Unprocessed or incomplete logs produce inaccurate models. At minimum, six months of clean, labeled security data is needed before effective model training can begin.

- Model drift: Attack patterns change weekly. Without monitoring, model performance declines. Therefore, teams must set drift thresholds that trigger automatic retraining.

- False positives: A 1% false positive rate across 100,000 daily events generates 1,000 analyst interruptions every day. However, threshold tuning combined with human review can reduce these interruptions over time.

- Bias risks: Behavioral AI systems can end up flagging users based on demographic characteristics rather than actual threat indicators. As a result, bias audits with tools such as IBM Watson OpenScale should be completed before any system goes into operational use.
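The false positive arithmetic and the effect of threshold tuning can be worked through on a small labeled sample. The scored events below are invented for illustration; the point is the trade-off, where raising the alert threshold removes false positives but starts missing real threats.

```python
def interruptions_per_day(daily_events: int, false_positive_rate: float) -> int:
    """Expected false alerts per day at a given false positive rate."""
    return round(daily_events * false_positive_rate)


def sweep_thresholds(scored: list[tuple[float, bool]], thresholds: list[float]) -> list[dict]:
    """For each threshold, count false positives and missed threats."""
    rows = []
    for t in thresholds:
        fp = sum(1 for score, is_threat in scored if score >= t and not is_threat)
        fn = sum(1 for score, is_threat in scored if score < t and is_threat)
        rows.append({"threshold": t, "false_positives": fp, "missed_threats": fn})
    return rows


# Hypothetical (risk score, confirmed threat?) pairs from analyst-reviewed alerts.
labeled = [
    (0.95, True), (0.85, True), (0.80, False), (0.65, True), (0.60, False),
    (0.55, False), (0.40, False), (0.30, False), (0.20, False), (0.10, False),
]

print(interruptions_per_day(100_000, 0.01))
sweep = sweep_thresholds(labeled, [0.5, 0.7, 0.9])
for row in sweep:
    print(row)
```

Because a stricter threshold trades interruptions for missed detections, events that fall just below the threshold are good candidates for periodic human review.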

Real-World Case Studies: AI-Driven Security in Action

Organizations that deploy AI in cybersecurity have results to show for it. Here are three concrete examples of AI in cybersecurity with measurable outcomes.

A European bank used AI-powered fraud detection to monitor 2.3 billion transactions per year. In the first year, fraud losses fell by 58% and false positives fell by 72%. The system also paid back its $4.2 million investment within seven months.

A US hospital network added AI-powered healthcare data mining to its security information and event management (SIEM) system. The system detected an insider data exfiltration attempt 18 days earlier than standard tools would have, which protected 840,000 patient records.

A global e-commerce platform used generative AI to assess 14 million daily API access requests across its security operations. Bot-driven credential stuffing attacks fell by 81% in the first three months after deployment.

These three cybersecurity examples show three patterns: faster detection, lower investigation costs, and fewer breaches. Organizations get the best results when they treat AI as a core part of their security system rather than an add-on.

FAQs

What does AI mean for cybersecurity?

AI in cybersecurity uses machine learning and deep learning to detect threats faster than traditional systems. It automates protection and response, helping teams act in real time during digital disruption.

What are the main examples of AI in cybersecurity?

Key examples include intrusion detection, phishing detection, user behavior monitoring, malware analysis, and automated incident response. These systems continuously scan patterns and flag anomalies before they escalate.

How does cybersecurity automation work with AI?

Through SOAR platforms, cybersecurity automation handles alert triage, threat scoring, and incident containment. This reduces response time from hours to seconds. It also frees analysts to focus on higher-priority investigations.

What is Generative AI used for in cybersecurity?

Gen AI in cybersecurity helps simulate attacks for red team exercises and strengthens testing environments. It also generates incident reports and threat summaries to reduce manual effort. More broadly, gen AI in cybersecurity is being used to accelerate threat research and automate documentation tasks.

What AI security certifications should professionals consider?

Top AI security certifications include GIAC Security Essentials (GSEC) and Certified AI Security Professional (CAISP). Vendor credentials from Microsoft, AWS, and Google Cloud are also highly valued. These AI security certifications are a strong foundation for any security professional working with AI systems.

What is the use of AI in cybersecurity for cloud security?

The use of AI in cybersecurity for cloud environments includes monitoring IAM activity, detecting misconfigurations, and identifying unusual API call patterns before breaches occur. Beyond cloud, these same capabilities extend to endpoint protection and network monitoring as well.

Durapid Technologies helps enterprises implement advanced AI-based cybersecurity solutions across their cloud, endpoint, and Security Operations Center environments. The company builds scalable security intelligence with a team of 120 certified cloud consultants and 95 certified Databricks professionals.