Agent Chaining 101: How Multi-Agent Systems Outperform Single Workflows

Your last AI project failed in production. Not because the model was bad. Not because the data was wrong. It collapsed because one system was asked to do too much. In healthcare software development, that kind of failure costs more than time: it erodes trust, complicates compliance, and puts patient safety at risk. Organizations that implement modular multi-agent AI systems achieve three times better task completion rates than those using single-agent systems, according to McKinsey research. This blog breaks down how agent chaining works, why it outperforms single workflows, and how industries are deploying it at scale. Most AI workflows today still rely on one system doing everything.

A single system doing everything works until the workload gets complex. Single-agent systems start breaking down when they have to scale healthcare software development, run operations, or make real-time decisions. This is where the move to multi-agent systems begins. Multiple agents work together, each with its own specialization. They complete specific duties and share information to improve overall performance. This is what we call agent chaining.

From AI customer service agents handling layered queries to agentic AI in supply chain optimizing logistics in real time, businesses are moving toward systems that collaborate instead of operating in isolation. This is no longer an experiment. OpenAI agents and advanced agentic AI use cases are now in wide use. And if you’ve been exploring solutions from a Top 10 Software Development company, you’ve probably already seen this transition happening. The sections below explain how agent chaining operates, why single workflows reach their limits, and how multi-agent systems perform in manufacturing and in enterprise healthcare software development built by top software development companies.

What Is Agent Chaining and Why Does It Matter?

Agent chaining connects multiple AI agents in sequence or in parallel, using the output from one agent as the input for the next. It works like an assembly line: each task moves through multiple stages, and a specialized agent handles each stage. Instead of one agent performing all the research, summarization, decision-making, and action execution, each function goes to a dedicated agent. This matters because LLMs operate within a finite context window. When one agent has to handle a complete complex workflow, it runs into that limit and its performance degrades. Chaining distributes the cognitive load across agents, which makes the system faster, more accurate, and simpler to debug. In healthcare software development, this architecture lets separate agents handle patient intake, insurance verification, diagnosis support, and documentation simultaneously without creating bottlenecks.
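As a rough illustration of the assembly-line idea, here is a minimal sketch of a sequential chain. The three agent functions are hypothetical stand-ins for LLM calls (they only format strings), and `run_chain` is an assumed helper name, not part of any framework.

```python
from typing import Callable, List

# An "agent" here is any function that takes text and returns text.
# In a real system each would wrap an LLM call; these are placeholders.
Agent = Callable[[str], str]


def research_agent(task: str) -> str:
    return f"notes on: {task}"


def summarize_agent(notes: str) -> str:
    return f"summary of ({notes})"


def decide_agent(summary: str) -> str:
    return f"decision based on [{summary}]"


def run_chain(agents: List[Agent], initial_input: str) -> str:
    """Feed the output of each agent into the next one, in order."""
    result = initial_input
    for agent in agents:
        result = agent(result)
    return result


if __name__ == "__main__":
    chain = [research_agent, summarize_agent, decide_agent]
    print(run_chain(chain, "patient intake backlog"))
```

Because each stage only sees the previous stage's output, a bad result can be traced to a single agent, which is where the debugging benefit comes from.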

How Multi-Agent LLM Systems Are Structured

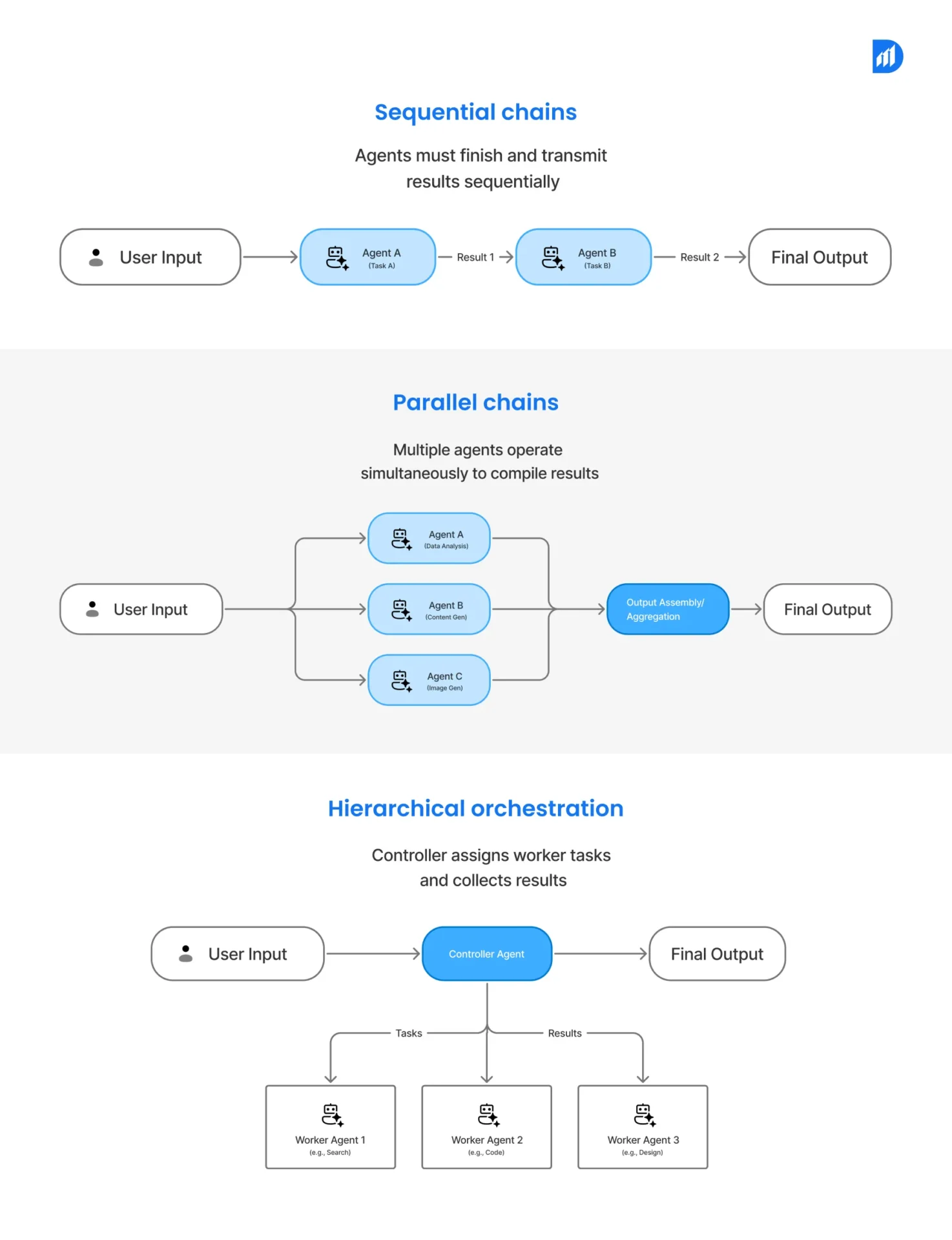

Multi-agent LLM systems follow three distinct patterns: sequential chains, parallel chains, and hierarchical orchestration. In a sequential chain, each agent finishes its task before passing results to the next stage. In a parallel chain, multiple agents run simultaneously and their outputs are compiled afterward. In hierarchical orchestration, a controller agent assigns specific tasks to worker agents and collects their results. Most enterprise systems deploy a hybrid of these. The breakdown of agent chaining by agent.ai shows that production systems combine multiple patterns rather than committing to one, using sequential handoffs or parallel execution depending on the task.
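The parallel and hierarchical patterns can be sketched with Python's standard `asyncio` module. This is only an illustration under stated assumptions: `worker`, `parallel_chain`, and `orchestrator` are hypothetical names, and the worker body stands in for a real model or tool call.

```python
import asyncio
from typing import List


async def worker(name: str, task: str) -> str:
    # Placeholder for an LLM or tool call; sleep(0) just yields control.
    await asyncio.sleep(0)
    return f"{name} finished {task}"


async def parallel_chain(task: str, names: List[str]) -> List[str]:
    """Run all workers concurrently; results come back in input order."""
    return await asyncio.gather(*(worker(n, task) for n in names))


async def orchestrator(task: str) -> str:
    """Controller agent: fan the task out to workers, then merge results."""
    results = await parallel_chain(task, ["retrieval", "analysis"])
    return " | ".join(results)


if __name__ == "__main__":
    print(asyncio.run(orchestrator("claim review")))
```

A hybrid system would simply place a call like `orchestrator` as one stage inside a longer sequential chain.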

The standard architecture consists of a planner agent, retrieval agents connected to vector databases or APIs, execution agents, and a validation layer before the final output. Frameworks such as LangGraph, AutoGen, and CrewAI are the main tools for connecting these components.

Why Single-Agent Workflows Hit a Ceiling

Single-agent setups work well for simple, isolated tasks. They fail when complexity rises. The core problem is that one agent cannot maintain deep context, call multiple tools reliably, and generate structured output at the same time without degrading in performance. Research from Stanford’s AI Lab shows that single-agent systems handling tasks with more than 5 interdependent steps experience a 42% drop in accuracy. The effect compounds in healthcare software development, where each step depends on previous work and a mistake at one stage creates downstream risk.

Single agents also fail at specialization. A general-purpose agent will perform poorly when prompted to both analyze lab results and draft a care summary. Two specialized agents, one for analysis and one for drafting, will do both well.

Why Multi-Agent LLM Systems Fail (And How to Avoid It)

Multi-agent systems fail for predictable reasons. The handoff layer is where most failures occur.

Four failure modes are common. Context loss occurs when Agent B doesn’t receive complete context from Agent A. Conflicting instructions arise when two agents receive overlapping prompts that produce contradictory outputs. Latency cascades happen when one slow agent blocks the full chain. And without fallback logic, the entire system crashes when one agent fails.

The fix is defensive architecture. Build structured outputs at each handoff point. Use JSON schemas to standardize what each agent passes forward. Add retry logic and fallback agents at critical nodes. Monitor with tools like LangSmith or Weights & Biases to catch failures early. Companies that implement structured handoffs and monitoring reduce multi-agent failure rates by 60% compared to unmonitored chains, according to Gartner (2024).
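A structured handoff with retry and fallback might look something like the sketch below. It uses only the standard library: the hand-rolled field check stands in for a full JSON Schema validator, and `REQUIRED_FIELDS`, `call_with_retry`, and both demo agents are hypothetical names chosen for illustration.

```python
import json
from typing import Callable

# Assumed contract for what one agent must pass to the next.
REQUIRED_FIELDS = {"patient_id": str, "summary": str}


def validate_handoff(raw: str) -> dict:
    """Parse an agent's raw output and check it against the contract."""
    payload = json.loads(raw)  # malformed JSON raises a ValueError subclass
    if not isinstance(payload, dict):
        raise ValueError("handoff must be a JSON object")
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(payload.get(field), expected_type):
            raise ValueError(f"missing or invalid field: {field}")
    return payload


def call_with_retry(agent: Callable[[str], str],
                    fallback: Callable[[str], str],
                    prompt: str,
                    max_attempts: int = 3) -> dict:
    """Retry the primary agent on invalid output, then use the fallback."""
    for _ in range(max_attempts):
        try:
            return validate_handoff(agent(prompt))
        except ValueError:
            continue
    return validate_handoff(fallback(prompt))


def make_flaky_agent() -> Callable[[str], str]:
    """Demo agent: returns garbage on the first call, valid JSON after."""
    calls = {"n": 0}

    def agent(prompt: str) -> str:
        calls["n"] += 1
        if calls["n"] == 1:
            return "not json"
        return json.dumps({"patient_id": "p-1", "summary": prompt})

    return agent


def fallback_agent(prompt: str) -> str:
    return json.dumps({"patient_id": "unknown", "summary": "needs human review"})


if __name__ == "__main__":
    print(call_with_retry(make_flaky_agent(), fallback_agent, "intake note"))
```

Validating at the boundary means a malformed output is caught where it was produced instead of surfacing several agents later.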

Agentic AI Use Cases Across Industries

Agentic AI use cases span every sector where decisions happen in sequences. Financial services use agents to conduct fraud detection, compliance verification, and customer communication through a sequential pipeline. In manufacturing, agents monitor production data, flag anomalies, and trigger maintenance requests without human intervention. Durapid’s work with AI in manufacturing shows measurable gains in predictive maintenance accuracy. Educational institutions use agent chains to manage student onboarding, track progress, and deliver personalized content. The energy sector uses agents to process sensor data, cross-reference usage patterns, and optimize grid load distribution in near real-time. Each workflow here shares one trait: no single agent could handle the full process reliably at scale.

Agentic AI in Supply Chain: A Practical Breakdown

The market for agentic AI in supply chain is growing fast. Its global value will reach $21 billion by 2028, according to IDC (2024). A chained supply chain system typically includes a demand forecasting agent, an inventory management agent, a supplier communication agent, and a logistics routing agent. Each feeds into the next. When demand spikes, the forecasting agent triggers the inventory agent, which prompts the supplier communication agent to place orders, and the routing agent then updates logistics automatically. This setup reduces manual work by 70% and cuts order fulfillment errors by 45% for high-volume distribution centers, according to Deloitte’s 2023 Supply Chain Report. In mature deployments, AI agents in supply chain reduce disruption response times from 48 hours to under 90 minutes.
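The four-agent flow described above could be sketched as follows. Everything here is an assumption made for illustration: the agents are plain functions rather than LLM calls, the forecast is a naive seven-day average, and names such as `handle_demand_signal` do not come from any real system.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Forecast:
    sku: str
    expected_units: int


@dataclass
class ReorderRequest:
    sku: str
    units: int


def forecasting_agent(sku: str, recent_daily_sales: List[int]) -> Forecast:
    # Naive forecast: average recent daily sales over a 7-day horizon.
    average = sum(recent_daily_sales) / len(recent_daily_sales)
    return Forecast(sku, round(average * 7))


def inventory_agent(forecast: Forecast, on_hand: int) -> Optional[ReorderRequest]:
    # Only raise a reorder when forecast demand exceeds current stock.
    shortfall = forecast.expected_units - on_hand
    return ReorderRequest(forecast.sku, shortfall) if shortfall > 0 else None


def supplier_agent(request: ReorderRequest) -> dict:
    return {"sku": request.sku, "units": request.units, "status": "ordered"}


def routing_agent(order: dict) -> str:
    return f"route {order['units']} units of {order['sku']} to the main warehouse"


def handle_demand_signal(sku: str, recent_daily_sales: List[int], on_hand: int) -> str:
    """Chain the agents: forecast -> inventory -> supplier -> routing."""
    forecast = forecasting_agent(sku, recent_daily_sales)
    request = inventory_agent(forecast, on_hand)
    if request is None:
        return "no action: stock covers forecast"
    return routing_agent(supplier_agent(request))


if __name__ == "__main__":
    print(handle_demand_signal("SKU-1", [10, 20, 30], on_hand=100))
```

The typed handoff objects (`Forecast`, `ReorderRequest`) make each agent's input and output explicit, so one agent can be replaced without touching the others.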

AI Customer Service Agents: Multi-Agent Done Right

AI customer service agents work best when specialized components function as a unified system. When a single agent handles intake, resolution, escalation, and follow-up, it creates delays and reduces first-contact resolution rates.

A multi-agent system uses an intake agent to classify the query, a knowledge retrieval agent to pull relevant answers, a resolution agent to draft the response, and a quality agent to review before sending.

This model produces a 28% increase in customer satisfaction scores alongside a 35% decrease in handling time, as shown in Salesforce’s State of Service report (2024). The system also provides round-the-clock coverage, and its output quality does not degrade with fatigue the way a human team’s can.

Real-World Multi-Agent Deployments You Should Know

Pindrop and Anonybit use agentic, multi-agent architectures for identity verification and fraud detection. Each agent handles a specific signal: voice, biometric, or behavioral data. A controller agent then combines all results to make the final decision. This achieves 38% fewer false positives compared to single-model approaches.
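To make the controller idea concrete, here is a generic sketch of weighted score fusion. It is not how Pindrop or Anonybit implement their systems; the weights, the threshold, and the `controller` function are all illustrative assumptions.

```python
from typing import Dict

# Illustrative weights for each signal agent's confidence score (0.0 to 1.0).
SIGNAL_WEIGHTS: Dict[str, float] = {
    "voice": 0.5,
    "biometric": 0.3,
    "behavioral": 0.2,
}


def controller(scores: Dict[str, float], threshold: float = 0.7) -> str:
    """Combine per-signal scores into one decision via a weighted sum."""
    combined = sum(SIGNAL_WEIGHTS[name] * scores[name] for name in SIGNAL_WEIGHTS)
    return "accept" if combined >= threshold else "review"


if __name__ == "__main__":
    print(controller({"voice": 0.9, "biometric": 0.8, "behavioral": 0.6}))
```

Because the decision rests on several independent signals, one noisy agent is less likely to trigger a false positive on its own.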

OpenAI Agents SDK, released in early 2025, gives developers a native framework for building and chaining agents with built-in handoff protocols and tool-use management. It makes production-grade agent chaining more accessible for teams building on GPT-4o.

Not every deployment is a success story. In some government deployments, immigration agents used AI to write use-of-force reports, a practice that has raised significant ethical concerns around transparency and accountability in automated decision-making.

How to Build an Agent Chain That Actually Works

Building a working agent chain requires four things: clear task decomposition, structured data contracts, observability, and fallback logic.

Begin by creating a complete map of all processes in your workflow. Identify which steps need reasoning, which need retrieval, and which need execution. Each stage gets its own dedicated agent. Define the input and output schema for every agent before writing the first prompt. Connect the chain through LangGraph or AutoGen. Add logging at every node and build a fallback response for each failure state.
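The logging and fallback steps can be sketched without committing to a framework. In this minimal example the `node` wrapper, the `retrieve` and `reason` agents, and the fallback values are hypothetical; in LangGraph or AutoGen the wiring would look different.

```python
import logging
from typing import Callable, Dict, List

logging.basicConfig(level=logging.INFO)
log = logging.getLogger("agent_chain")

State = Dict[str, object]


def node(name: str, fn: Callable[[State], State], fallback: State) -> Callable[[State], State]:
    """Wrap an agent so each call is logged and a failure yields a fallback."""
    def wrapped(state: State) -> State:
        # Log key names only, so payload values stay out of the logs.
        log.info("enter %s with keys=%s", name, sorted(state))
        try:
            output = fn(state)
        except Exception:
            log.exception("%s failed; using fallback", name)
            output = fallback
        log.info("exit %s", name)
        return {**state, **output}
    return wrapped


def retrieve(state: State) -> State:
    return {"docs": ["policy.pdf"]}


def reason(state: State) -> State:
    # Simulated failure to show the fallback path.
    raise RuntimeError("model timeout")


CHAIN: List[Callable[[State], State]] = [
    node("retrieve", retrieve, fallback={"docs": []}),
    node("reason", reason, fallback={"answer": "escalate to human"}),
]


def run(state: State) -> State:
    for step in CHAIN:
        state = step(state)
    return state


if __name__ == "__main__":
    print(run({"query": "coverage question"}))
```

Each node declares its own fallback, so a failure state is handled where it occurs and the rest of the chain keeps running.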

For teams in healthcare software development, this process also requires HIPAA-compliant data handling at every agent boundary. Durapid’s software development practice builds agent chains with compliance layers baked into the architecture, not bolted on afterward.

The Future of Agentic AI: What’s Coming Next

Agentic AI is moving toward self-improving chains. In these chains, agents can evaluate their own outputs, request clarification from other agents, and restructure the chain based on performance feedback. OpenAI’s research on “agentic loops” and Google DeepMind’s work on multi-agent reinforcement learning both point in this direction.

Gartner predicts that by 2027, 40% of enterprise AI deployments will use multi-agent architectures as a default. Companies building infrastructure now through platforms, tooling, and internal expertise will hold a compounding advantage over those still running single-agent pilots. Durapid’s top 10 software development resources and its AI marketing agents offer practical entry points for teams building production-ready agent systems.

FAQs

What is agent chaining in AI?

Agent chaining connects multiple AI agents in sequence, where each agent completes one task and passes its output to the next, enabling complex workflows no single agent can handle alone.

Why do multi-agent LLM systems fail?

Most failures happen at handoffs, where context is lost or conflicting instructions are passed. Structured output schemas and monitoring tools reduce failure rates significantly.

How is agentic AI used in supply chain?

Agentic AI in supply chain automates demand forecasting, inventory management, supplier communication, and logistics routing, reducing fulfillment errors by up to 45% in high-volume environments.

What makes multi-agent systems better for healthcare software development?

Healthcare workflows involve strict sequencing and compliance requirements. Multi-agent systems assign specialized agents to each step, improving accuracy and enabling HIPAA-compliant data handling at every layer.

Which tools are commonly used to build agent chains?

LangGraph, AutoGen, CrewAI, and the OpenAI Agents SDK are the most widely used frameworks for building, orchestrating, and monitoring multi-agent LLM pipelines in production.